Digital Transformation: Going Back to Basics

What does “Digital Transformation” actually mean?

The phrase “Digital Transformation” seems to be everywhere these days. A quick Google search yields in excess of 11 million results, which is rather good for something that regularly markets itself to punters as being “niche”.

Attempting to define “Digital Transformation” is a story in its own right; above all else, there is choosing the angle from which to approach it. More than enough has been written on it from the business perspective, but comparatively little attention has been paid to what it really means for my own discipline (software development).

Examining “Digital Transformation” through the lens of software development still does not get us much closer to defining it. Fortunately, there exists a Wikipedia article dedicated to the subject — a fairly reliable rule-of-thumb indicating that something is at least worthy of discussion. The top of the article broadly defines “Digital Transformation” as:

the change associated with the application of digital technology in all aspects of human society

which can:

enable new types of innovation and creativity in a particular domain

Over the years I have heard similar definitions thrown around for phrases like “E-commerce Transformation”, “Cloud Transformation” or “Mobile Transformation”, plus numerous others. So, does this high-level notion of “Digital Transformation” really represent anything new, or is this just another instance of our mammalian brains falling for a clever case of rebranding? My instinct leans towards the latter: humans have a terrible habit of exaggerating anything that feels remotely novel, no matter how minor the difference may be. Unfortunately, we also have a habit of believing our own hype, and as a result we tend to ignore occasions when history repeats itself.

What constitutes real change?

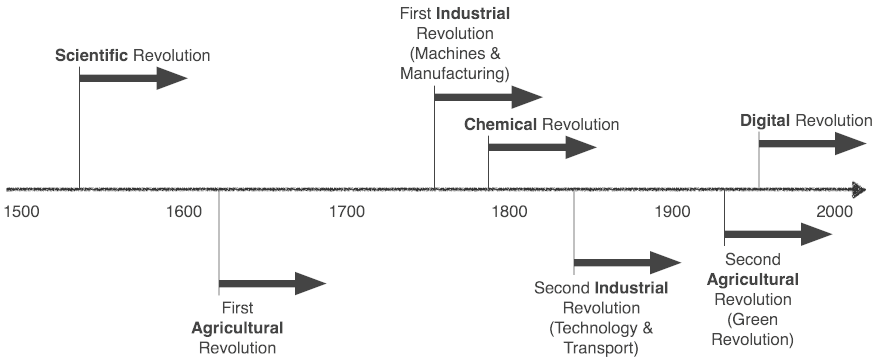

That said, genuine change on a scale that can impact the whole of humanity is nothing new either: it happens more often than one may think, and more regularly than it did in the past. So much so, in fact, that there is a specific term semi-reserved for it in historical contexts: revolution. Not “revolution” in the political sense, but one that denotes a documented epoch during which human civilisation was elevated to another level, primarily through significant advancement in an eponymous area of technology.

The most well-known of these is of course the “Industrial Revolution”, of which there have been at least two in Europe alone. However, since the end of the Renaissance there has been a new technological revolution at least every one hundred years, and over time they have increased in frequency (and, more recently, concurrency).

Technological revolutions are more common than you may think

What constitutes a revolution is also an interesting matter for debate, though (as always) having a Wikipedia article dedicated to it is at least a good starting point. However, there are two factors that could be objectively considered common:

- Dramatic and far-reaching impact on human society

- New avenues of innovation and creativity being opened and exploited

These points (broad though they are) sound disarmingly similar to the high-level definition of “Digital Transformation” given above. So, perhaps the concepts it encapsulates are even less original than previously thought.

What does this mean with regard to where software development is today? Specifically, what does it mean in the context of the technological revolution we are currently experiencing: the “Digital Revolution”?

To my mind, the answer is simple: the concept of “Digital Transformation” is merely a reiteration of the change and innovation in which the software industry has been participating since the invention of the transistor. In some ways the term is largely redundant: can anyone demonstrate a time when digital technology has not been transforming the world? If anything, dramatic systemic change should be considered business-as-usual for software development.

What are the challenges?

What does it say about our industry, caught in the middle of a revolution, when the mere act of instigating change is somehow considered marketable? That in itself is an implicit indictment of how the world perceives us, and (more tellingly) how we perceive ourselves. For one thing, we are one of just a couple of disciplines that are considered to be “in crisis” due to lack of quality, reliability, maintainability, cost efficiency, etc., for the products and services we provide. Not only that, but the moniker has been with us for more than half a century (1968 to be precise — once again, Wikipedia FTW).

So what went wrong? When assimilating positive change within an industry is demonstrably more difficult than it should be, it tends to be symptomatic of deeper problems. Rather surprisingly, these often include: lack of manageable standard operating procedures or established best practices.

It may seem counterintuitive, but stable working processes can actually drive business agility and the ability to accommodate change reliably — they provide foundations upon which innovators can let their imaginations run free, a soft landing when experiments fail, and accommodating frameworks when they succeed. Toyota realised this in the late 1940s when they began establishing the Toyota Production System (arguably the spiritual progenitor of modern Agile methodologies). By making stability the foundation of their business practices, within a generation the company was transformed from a bit-player in the Japanese market to the single most successful automobile manufacturer on the planet.

As practitioners and technologists, most of us will have at least some experience of working in an environment where real technological change seems to happen at a glacial pace, and for some of us this will sadly be the norm. Here I distinguish “real” change as being something meaningful, productive or concordant with adding value, as opposed to superficial window-dressing or attempting to initiate change through uncritical, dogmatic witchdoctory (yes, Agile, I am looking at you; just kidding, I love you really).

What does it all mean?

If the notion of “Digital Transformation” really is redundant in software development, then the issue it was intended to address (i.e. present day shortcomings in the way a company manages digital technology) could be construed as a distraction, whether by choice or by accident. It is often marketed as a silver bullet to solve the problems in digital strategy by catalysing necessary change, but let us assume for a moment that change itself is a natural consequence of business-as-usual in the software industry. If transformation is the target, and change is inevitable, then perhaps the real weakness is in how companies themselves conduct business-as-usual; and (given the fact the “software crisis” is still alive and well) that is probably not an unreasonable conclusion to draw in many cases.

The lack of consistent best practices for quality assurance, code reviews, integration testing, rapid prototyping, test strategy, risk assessment, project design, architecture, version control, release management, instrumentation, user experience, or documentation across companies says more about the state of our craft than most would care to admit. How can this still be the case after more than 50 years of trial and error? Perhaps because a frighteningly large number of software engineers have a hard time accepting good ideas that they did not think of themselves (a derivative of “Not Invented Here” syndrome); individually we feel the need to put our personal signature on answers to problems that were solved years ago, and thus continue the cycle of wheel-reinvention — frantically turning whilst somehow managing to remain stationary. Design Patterns are the closest thing we have to universally agreed standards, but even they can only get you so far.

Few would argue that software development does not still suffer endemic problems, whether they are projects running over-budget, projects running over-time, low quality deliverables, unmet requirements, or simply projects never reaching production. Despite this, the basal pace of technological change has for a long time shielded us from the cold truth that a large fraction of our industry still struggles to deliver projects on time, on budget, and/or on quality, without cutting corners. The fact that we are consistently failing to fulfil these basic criteria should have us standing back in astonished self-reflection, but that never happens because we know that in 12 months’ time we will be working on new paradigms, stacks, and frameworks, whose sheer novelty provides us with renewed reasons as to why we will always need “just a few more sprints”.

What can we do about it?

I was a computational biologist in a previous life, and I often find myself revisiting my observations on biological systems as a guide to how humans tend to behave, even without realising it. As a species we are often so impressed by ourselves that we forget that nature has been winning at this same game for much longer than we could ever imagine. It is truly amazing what can be achieved with a billion-year schedule, globally redundant resources, and an endless supply of expendable labour!

Whatever your take on the natural world, a sad fact of life is that change is difficult, and few ever get it right the first time. However, change is also necessary in order to avoid demise or stagnation. Even at the coalface of software development the same holds true: many cling on to the idea that making changes to software must be easy because (unlike other fields of engineering) we are not carving stone or welding metal. They either forget or ignore the fact that, in all cerebrally creative disciplines, any degree of change requires great care regardless of the substrate. If making changes to software really were a trivial matter then we would have no need for regression testing, contingency planning, or even the term “brownfield”.

Despite all this, the irony is that systemic change is unavoidable: for better or for worse, change will happen inside and outside your company whether you like it or not, and that makes harnessing the benefits of the change around you a necessary part of everyday practice for organisations who wish to survive. By resisting change for fear of the unknown, we risk being left behind when the wave finally passes.

Unfortunately, systemic change is also slow: any attempt to break homeostasis in the short-term will inevitably be met with resistance spawned from strategy, culture, people, process, technology, or analysis (but mostly people: never underestimate the nullifying power of ego). Even something as basic as task estimation can rapidly descend into the realms of cargo-cult and wishful thinking without careful oversight; but attempts to suggest an alternative are routinely resisted with claims that anything else would be “too difficult” or “more work”, as if these were somehow valid excuses for lack of improvement. That something is difficult to do well is no reason to avoid trying.

A word of warning

For any change to succeed it must, above all, be simple to assimilate. Even if the reasoning and theory behind it are complex, the implementation must be easy enough for anyone to grasp; not everyone needs (or wants) to understand why something is happening, and sometimes no one will understand why something is happening! But that is okay: development teams may be smart, but they already have a difficult job to do; saddling them with false burdens of no demonstrable value does nobody any favours. Any individual ant may not know about the machinations of its colony, but by doing its job well and in harmony with its cohort it can fell trees, build bridges, and construct underground cities of astonishing intricacy with just a simple set of rules to follow.

Similarly, successful change is coordinated rather than controlled, especially when it consists of highly intelligent actors. You would be hard-pressed to try and control any group like that. Some liken managing a development team to herding cats — I prefer likening it to herding attention-deficient cats with their own egos, anxieties, characters, passions, ambitions, insecurities, aptitudes, and talents, all whilst simultaneously shielding them from a pack of circling pitbulls. I still have the marks to show for it.

If these observations are correct, then it quickly becomes clear that complex change can only begin from the ground up, and must be built from a library of small, stable, and repeatable steps. While it cannot always be predicted, macroscopic change can at least be steered through targeted adjustments and careful monitoring of progress. Only through these micro-level alterations can reliable macro-level changes emerge, and once they do emerge they will self-sustain (ask any ship’s captain when they try to change course). Top-down proclamations or transformations through diktat rarely gain traction, let alone success.

Finally, and above all, we (the software development industry) must revisit the basics and master those that can help address the problems of today before worrying about next year’s big trend, otherwise those problems will simply persist beneath the veneer of those shiny new toys. Basics like: proper system design (not just lip service); proper project design (no more “wet fingers in the air”); no more compromise on quality (better development, more testing, system-wide quality assurance, zero tolerance for defects); transparency with customers (being prepared to say “no” to unrealistic expectations); gauging risk and being ready for it (not just hoping it will never happen); and continuous improvement (acknowledging mistakes and embracing them as chances to learn).

Personally, if I could wave a magic wand and pick just one thing from my mental wish list to transform overnight, it would be to stop treating testing like a second-class facet of software creation. No development manager in the history of anything has ever slapped their forehead in despair at the thought of having too many testers on their team!

Final Thoughts

Tolstoy once wrote:

Happy families are all alike; each unhappy family is unhappy in its own way.

To put it another way: in any task there may be a million ways to get things wrong, but only a handful of ways to get things right. This is especially true of thought-intensive disciplines like software. While there may be some hitherto undiscovered best practices the industry could one day universally adopt, for now the problem is incumbent upon each of us to solve for our own situation. The first step is to acknowledge that the problem exists. At the moment we are still so captivated by the brilliant new technologies coming over the horizon, and the challenges they will bring, that we neglect the need to master the basics of our craft for the challenges we already face.

If we truly want to be the best we can be, then perhaps the first step is to swallow our pride and be willing to admit that we cannot hope to revolutionise our craft until we can reliably learn, adapt, and (above all) teach how to fulfil the basic responsibilities expected of true professionals.